I hate machine learning so much, to the point that I’m almost willing to consider bad arguments against it! But ideally let’s stick to good arguments against it, and see how far we can get.

I started hating ML from a gut feeling first, and had to come up with arguments and reasons later. Feels over reals. I think most people work this way without being aware of it. What the thinker thinks, the prover will prove.

Because I really do hate bad arguments even for a good cause.

Bad argument. I thought we hated copyright. Extending the definition of copyright beyond copying works to even include learning from looking at the work is beyond twisted. It’s anti-intellectual. “Close your eyes! Don’t learn! Don’t get inspired! Fester in stale water!” That can’t be the way.

Short-term argument. The models of today are not fully hooked up yet. They’re UI demos. I mean, yes, they do suck, all AI art is really ugly and all AI text is really dumb and all AI-programmed apps are really bad and buggy and AI is hallucinating more than Wikipedia.

Presently.

But if they stay this bad, we won’t need any arguments because they’ll collapse by themselves in dot-com 2.0, and if they become good, this argument isn’t gonna last us far.

A sub-argument of “they suck” that I see more and more is “they can’t do original things, they can’t be creative”. That one, I don’t buy. Plenty of books created with the same old boring twenty-six letters that still read like fresh new things. There are ways to mix A and B that end up nothing like either. Just like our own DNA. We’re all unique li’l creatures even though we were created through these stochastically combined carbon-based copy machines.

That sub-argument is a very bad argument because we’re already seeing new and fresh creations. Not necessarily good ones. This is a super flimsy ground to build your anti-ML case on since people can experience for their own selves how wildly creative these machines can be if you get off the super derivative prompts.

Super bad argument. Education adapted to cameras and calculators. If it weren’t for these other arguments against ML, education could adapt to it as a new tool, too.

They make us question what being human really is. So did cameras and movie cameras, but, that’s not necessarily a particularly fun fire to play with. As dismissive as I am of concerns about their use in education, I take this part seriously. The art of humanity struggled with those inventions and barely made it out on the other side OK. What is our purpose once we’ve automated every little dream away?

Pretty good argument, but we eventually did manage to survive cameras, and artists could use them, leverage them. Arguably the birth of realist painting and the Enlightenment was due to the invention of optical devices and their adaptation by painters like da Vinci and Vermeer.

Personally, yeah, it felt like a super gut punch. Compared to other artists I felt like my journey towards becoming good took a lot longer than most, but I didn’t give up and I finally got there and became able to draw and paint well. I spent 20 years on what would’ve taken many other artists a few years (they’d either get good or give up). And then within one year of me finally feeling like I knew how to do it, along comes DALL-E. FML.

A.k.a. bots will take our jobs.

Obviously, research regarding technological unemployment is as vital today as further refinement or production of labor-saving and comfort-giving devices. Unless we radically and fundamentally transform distribution of resources and labor, ML is gonna make it so that the owner class is gonna own even more and the wealth gaps are gonna widen. This is a good argument when we’re speaking among ourselves and planning to storm the palace. As far as the owner class themselves are concerned, I’m pretty sure they’ve chalked this one up in the “pro” column rather than as a true danger of ML.

This is an issue I’ve been thinking about all my life. Since I was like six years old. It’s what steered me leftward, too. I was like “OK if bots take over our jobs that’s gonna be good because we won’t have to work but it’s gonna be bad because how will we get money? Some people will own the bots but not everyone can do that and then people will starve and it’ll be a huge disaster.” Damn it. I wish I had been wrong about that.

It’s an argument that’s not entirely clear-cut because if it weren’t for market capitalism’s little exploitation issue, this would’ve been a good thing rather than a bad. Y’all know how much I hate the human compiler at work, hate having to do boring chores by hand that a bot could do. But it’s certainly destroyed my own life.

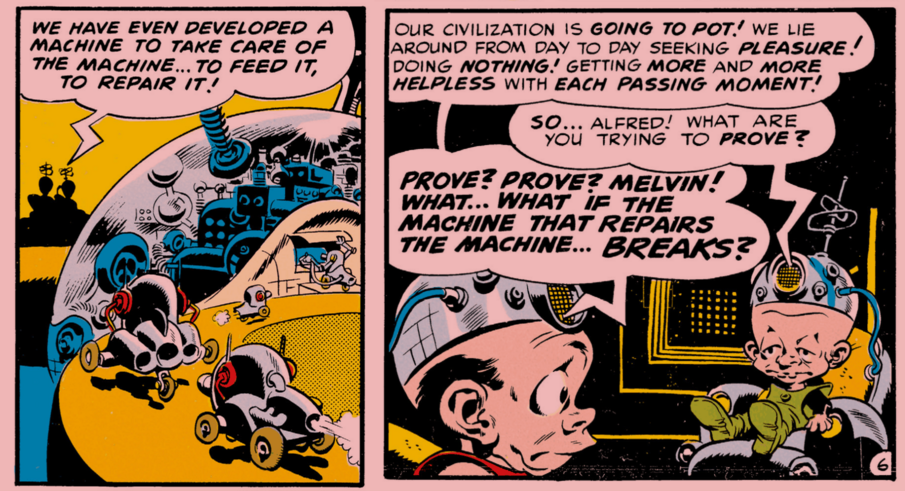

Automating things we used to do by hand is dangerous because soon enough we won’t be able to do those things.

This argument is a mixed bag for me. There are plenty of skills I can say “good riddance” to if AI takes over. But there is also a core of basic things—like critical thinking and puzzle-solving—that we need to retain.

This doesn’t apply to all AI apps, because some models are self-hostable. But the hosted ones, like all remote apps, all cloud apps, come with all the traditional drawbacks of server-side apps. We wanna use free software. I know that there is a resource-use advantage to running some of the models centrally and sharing some of the load, but it’s easy to get fooled into giving the corporations the power.

Surveillance, exploitation, a powerful tool in the hands of the mighty. Yeah. A good argument. New technology has historically facilitated theretofore unimaginable horrors.

The fake news is gonna get faker, and that includes fake news by state actors like Trump and Putin, and their dismissal of real news by labeling it as “fake”. Good argument, but just like the previous two arguments, talking isn’t gonna do much in the face of malicious actors. These tools are a wild chainsaw swung chaotically and they’re a precise scalpel wielded ruthlessly. Once they’re in the wrong hands it might be too late to talk about it.

If the argument is “the models are gonna come alive and feel bad”, then no. Not a good argument. If the argument is “fools are going to mistakenly believe that the models are alive and give them too much responsibility or respect” then maybe. Yeah. Yes, I am afraid of that. That was my first fear, although arguably many of the other arguments I list here are even bigger issues.

Very good argument. Training these models uses a climate-wreckingly large amount of resources. Probably the best argument. The most legitimate reason to regulate them (even Hayek realizes that externalities need regulation) and the biggest drawback with them. It’s a good argument because it appeals to all quarters, and it’s true, and it’s one of the biggest reasons to hate them.

This is something we absolutely need to fix before going forward. Climate change is urgent, real, and it’s here now. People who dismiss this issue need to sober up or they’ll have the friendliest chatbot on the cinder.

Good argument, but not all AI companies have been equal in this regard. Some ruthlessly ignore robots.txt and scrape and rescrape and bring dynamic websites to their knees. This is not something inherent to ML for all time but it’s certainly a very understandable reason to hate them now, especially specific corporations (looking especially angrily at you, Claude).

Great argument. Not only are the current implementations all “okay and do you want to know a secret about the thing I just said? It’s super juicy” conversation-prolongy, they’re also inherently an eerily good slot for the main two reasons people get hooked on socials: the loneliness/connection impulse, and the “random reward” slot machine kick since you never know what it’s gonna say.

And once you’re hooked to reveal all your secrets to it, here come the ads.

Wow, yeah, I’ve read about some horrific cases where they have encouraged people toward actually dying. Not only those extreme cases but maybe it’s just something inherent to talking to them that’s a little bit crazy-making. Tentatively putting this as a good argument subject to moving to bad or to great depending on how widespread this eventually turns out to be.

Some people supposedly run unsupervised bots on the net. Yeah, this is messed up. Blackmail, doxing, made-up rumors, all that stuff is a possible risk. I don’t know too much about the situation or the veracity. But even if the reported cases turn out to have just been larpers, otherkin, or hoaxers (I’m not claiming real or unreal, I just have no way to know), it’s theoretically possible given the right event loop. Since this here is a future-looking essay, I want to give credence to things that might happen, and I’m putting this one up as a good argument since that thought scares me more than amuses me.

Someone I don’t get along with very well wrote:

> This person has no idea what machine learning actually is. And they hate such a generic concept on a “gut feeling” and come up with the reasons later?
>
> If you want good reasons to hate AI generated art you won’t find them in this shitty blogpost.

I’ve got a degree in computational linguistics. I know how a multi-layer vector-space ANN works. But the implementation is not what the post is about.

It’s about how training a new model uses a lot of resources, which is true to a climate-wrecking degree. Then using those models is no worse than any other app, but we’re seeing a boom of new models and new training projects.

It’s about how a model is such a big investment that it’s not commonly found on every desk or in every garage. Instead, they are owned and controlled by big organizations, usually for-profit corporations. These tools are part of the centralization of wealth and power we’ve been seeing throughout the industrial age.

It’s also, tertiarily, about how these models can impact society, education, and art. But that’s something we’re still only speculating about.

It feels bad. It’s true that I started with the gut feeling first and reviewed the arguments after. I always say that if you aren’t feeling, you’re only using half your brain. But I then saw that some of the arguments that I would’ve wanted to rely on were pretty darn weak. And that other arguments, that I hadn’t even considered, were strong.

Humans have this natural bias to feel first, think later. That’s the best case scenario.

More common, unfortunately, is to just feel and then kid yourself into thinking that your emotions are reason. That’s not what I was doing. I wasn’t going “Oooh this AI art looks pretty nifty! What a great invention!” and stopping there.

Nor did I stop at “Ugh, it’s ugly” or “As an artist, this is super scary”. I kept thinking about it until I had thought it through. And some of my own initial gut feeling arguments, like “it’s ugly”, are among the bad arguments that I discarded.

Thinking about things from multiple perspectives and with all kindsa arguments before you make up your mind is generally a good idea.

If there are good arguments against ML and AI art that I’m missing, of course I want to add them, along with some of the most popular of the bad arguments, too, if I’m missing any there.

If…

Then I believe that ANN LLM is the right tool for many applications and can solve a slew of problems.

But those are four pretty big ifs. It’s the science fiction world I’m working towards but we’re not there yet and perhaps never will be. AI foisted on us outside that context has worsened the absolute horrors of modern real life.